Containerization with Docker-3

Docker-Swarm & Docker-Stacks

What is Docker-Swarm?

Docker Swarm is a native clustering solution for Docker. It turns a pool of Docker hosts into a single, virtual Docker host.

It is a tool for creating and managing a cluster of Docker nodes. It allows users to create a swarm (a group) of Docker engines, and then deploy and manage services across the swarm. It provides features such as service discovery, load balancing, and rolling updates. It is intended for production use and is a more robust way to scale and manage large applications than running standalone containers.

Why Docker-Swarm

We already know how to run Docker containers and host an application inside them, but there is a downside. A standalone container has no protection against being stopped: anyone with access to the server can run docker ps, get the container ID, run docker kill, and the container is dead.

Because of this, standalone containers are rarely used directly in production. Docker Swarm fills the gap with its auto-healing capability, which removes the fear of container termination: whenever a container is terminated or killed, for any external or internal reason, Swarm starts a replacement so the service keeps running.

NB: You do not need to install Docker Swarm separately; swarm mode is built into the Docker Engine. Kubernetes (a similar tool to Docker Swarm), on the other hand, has to be installed separately.

Docker-Swarm Architecture

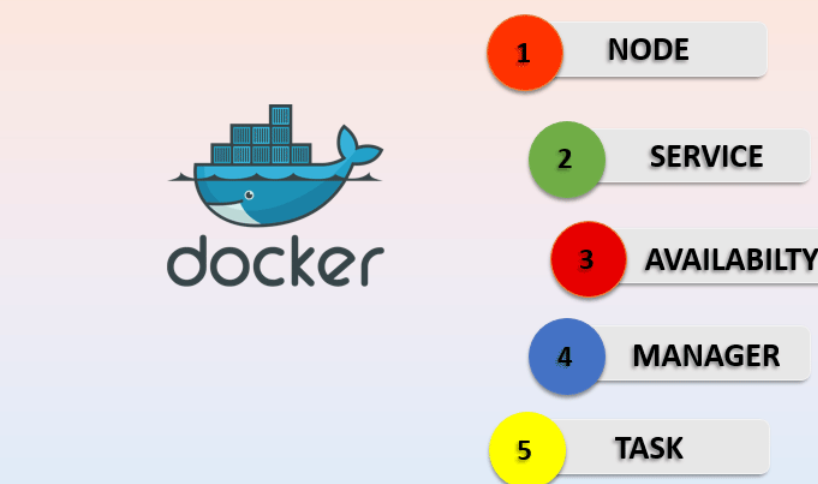

Docker Node: A Docker Engine instance that participates in the swarm. There are two kinds:

Manager Node: Responsible for all orchestration and container management tasks required to maintain the system in the desired state, such as maintaining the cluster state, scheduling the services and serving the swarm mode HTTP endpoints. It not only manages other nodes; by default it also runs containers itself.

Worker Node: A swarm can have many worker nodes. This walkthrough uses a single manager node, although a swarm can have several managers, of which exactly one is the Leader at any time. The only responsibility of a worker node is to execute the tasks given to it by a manager.

Task: The unit of work that Swarm schedules on a node. Each task is one Docker container together with the command it runs.

Docker Service: The definition of the tasks that need to be executed, for example which image to run, how many replicas to keep and which ports to publish.

Hands-on Illustration of end to end App deployment through Docker-Swarm

Step-1(Launching required instances)

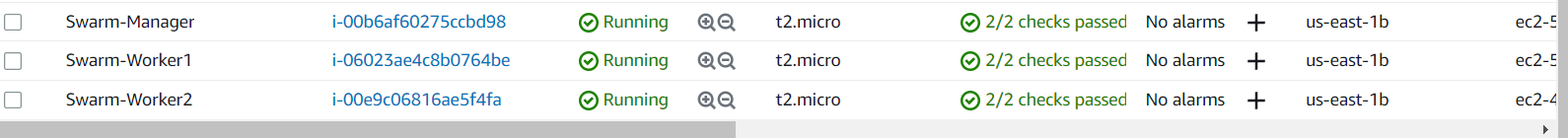

Create three or more EC2 instances and name one of them Swarm-Manager (you can choose any names you like, as long as you can tell the instances apart).

docker --version : make sure Docker is installed on every server; if it is not, install it with sudo apt install docker.io -y .

So far we have only spun up the servers; the swarm is not yet initiated.

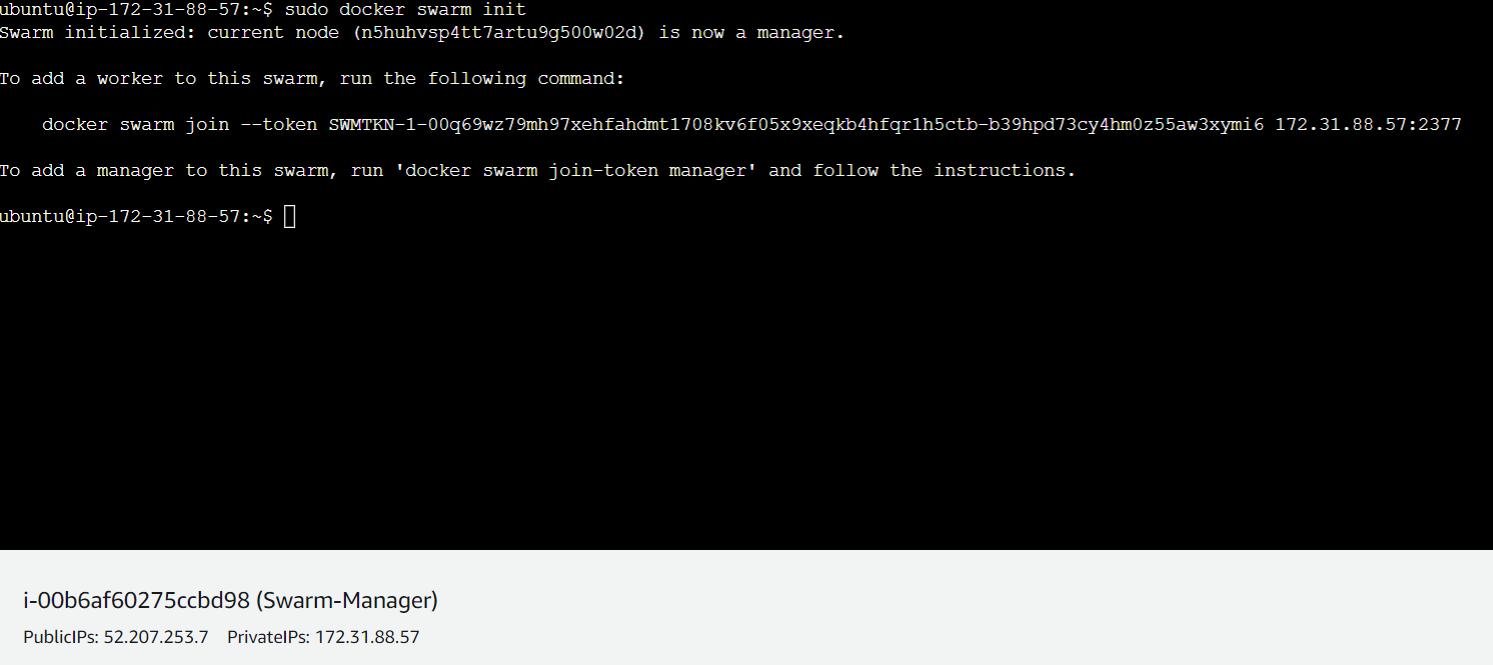

Step-2(Initiating Swarm)

To make the Swarm-Manager instance function as a manager, we need to initiate a docker swarm. Without initiating a swarm, it is just an ordinary instance and can't work as Swarm Manager.

sudo docker swarm init : this command initiates a swarm on the manager instance. The swarm is empty at this point: no services are running yet on the Swarm-Manager server.
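If the manager instance has more than one network interface, it can help to tell Swarm which address the other nodes should use to reach it. The following is a minimal sketch, assuming the manager's private IP is 172.31.88.57 (the address that appears in the join command later in this post); the function name init_swarm is only an illustration.

```shell
# Initialise a swarm on the current host and advertise a specific address.
# $1: private IP of the manager (172.31.88.57 in this walkthrough).
init_swarm() {
    sudo docker swarm init --advertise-addr "$1"
}

# Example, run on the Swarm-Manager instance:
#   init_swarm 172.31.88.57
```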

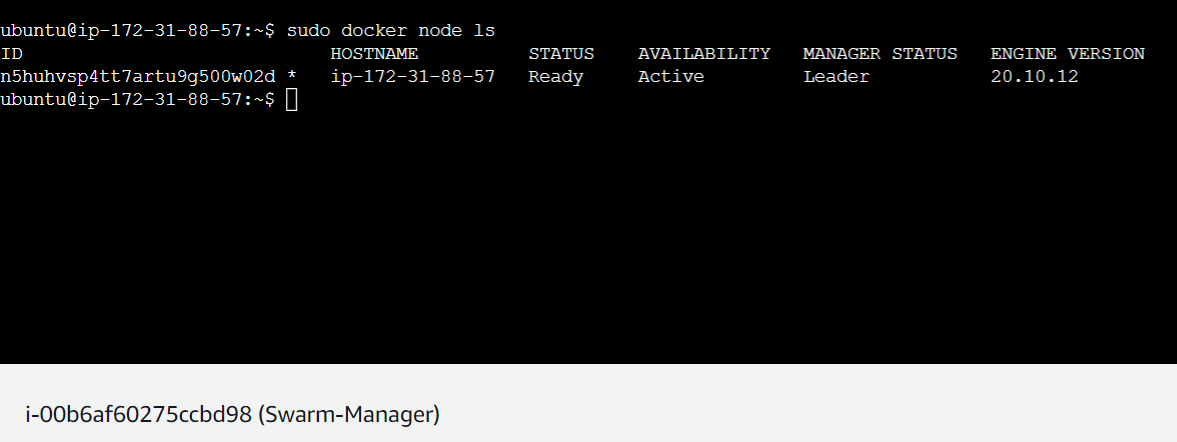

Step-3(Checking Node Status)

sudo docker node ls : this command lists the nodes in the swarm along with their hostname, status, availability, manager status and engine version. In the picture below there is currently only one node, the manager (Leader) itself, because so far we have only initiated the swarm on the manager server.

NB: The server on which the swarm is initiated is shown as Leader.

Manager Status shows Leader for the leading manager and Reachable for any other manager; it is empty for worker nodes.

Engine Version denotes the version of the docker engine.
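When the node list grows, the table can be reduced to just the columns you care about with the --format option. As a small sketch (the function names are made up for this post), the helpers below print a compact node list and count how many nodes report the status Ready.

```shell
# Print hostname, status, availability and manager status, one node per line.
list_nodes() {
    sudo docker node ls \
        --format '{{.Hostname}} {{.Status}} {{.Availability}} {{.ManagerStatus}}'
}

# Count the nodes whose status is exactly "Ready".
count_ready_nodes() {
    sudo docker node ls --format '{{.Status}}' | grep -c '^Ready$'
}

# Example, run on the manager:
#   list_nodes
#   count_ready_nodes
```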

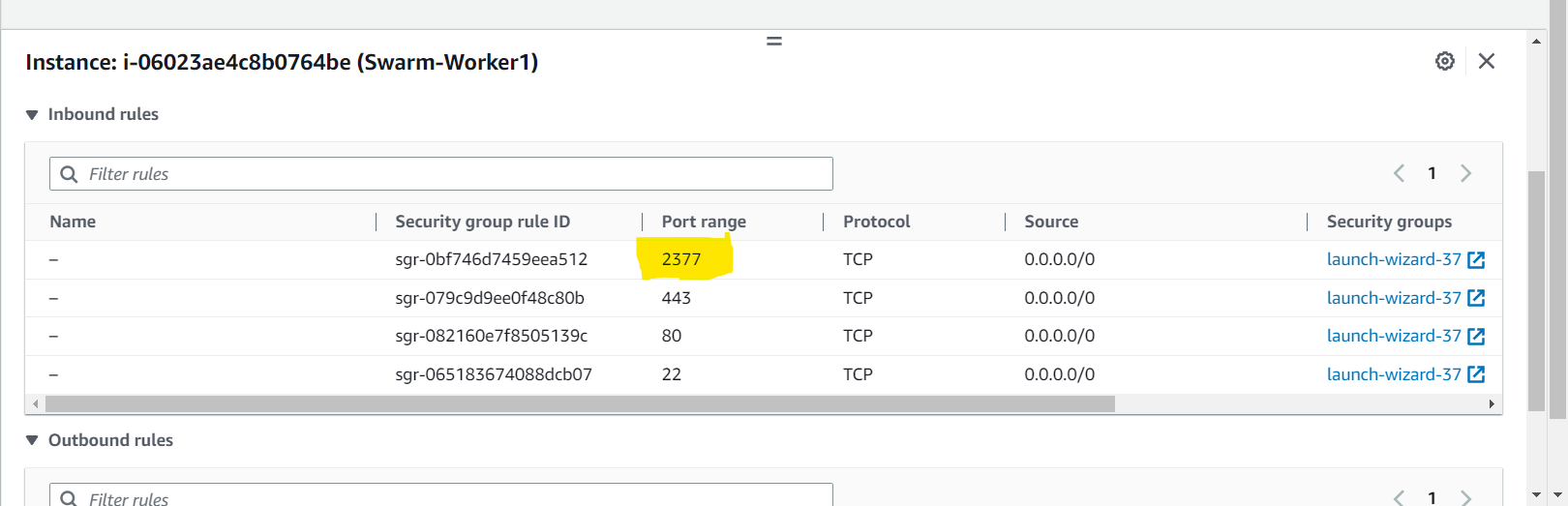

Step-4(Setting inbound rule of worker nodes)

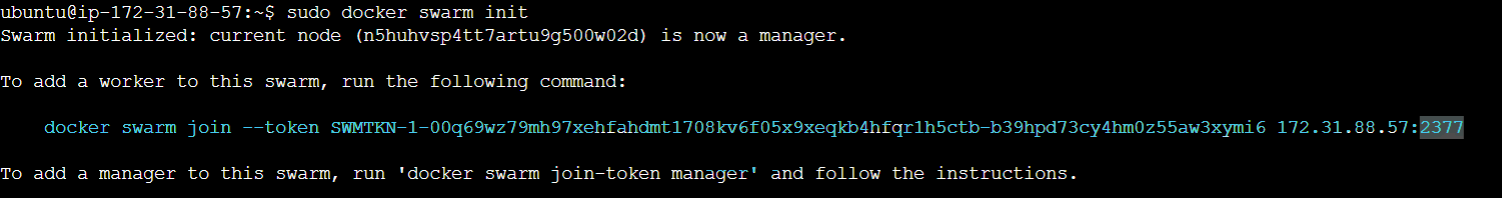

We have now created the leader node and the servers that will become worker nodes.

However, the worker nodes cannot connect to the leader yet. As the picture below shows, when we initiated the swarm the leader printed a join command containing a token and its own address on port 2377. Workers join the swarm through that port, so TCP port 2377 has to be open.

To open port 2377, edit the inbound rules and add a Custom TCP rule for port 2377. This walkthrough adds the rule on all the worker nodes; because the workers connect to port 2377 on the leader, make sure the leader's security group allows it as well.
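The same inbound rules can also be added from the command line instead of the EC2 console. The sketch below assumes the AWS CLI is installed and configured, which this post does not otherwise use, and that all swarm nodes share one security group; the group ID and CIDR range in the example are placeholders. Besides 2377, it opens the other ports that swarm mode uses between nodes.

```shell
# Open the swarm ports in one security group.
# $1: security group ID (placeholder, e.g. sg-0123456789abcdef0)
# $2: CIDR range that the swarm nodes live in (placeholder, e.g. 172.31.0.0/16)
open_swarm_ports() {
    sg="$1"
    cidr="$2"
    # tcp:2377 cluster management traffic (used by docker swarm join)
    # tcp:7946 and udp:7946 communication among nodes
    # udp:4789 overlay network traffic
    for rule in tcp:2377 tcp:7946 udp:7946 udp:4789; do
        aws ec2 authorize-security-group-ingress --group-id "$sg" \
            --protocol "${rule%%:*}" --port "${rule##*:}" --cidr "$cidr"
    done
}

# Example:
#   open_swarm_ports sg-0123456789abcdef0 172.31.0.0/16
```

Restricting the rule to the nodes' own address range, rather than 0.0.0.0/0, keeps the management port closed to the public internet.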

Step-5(Joining the Workers with Leader)

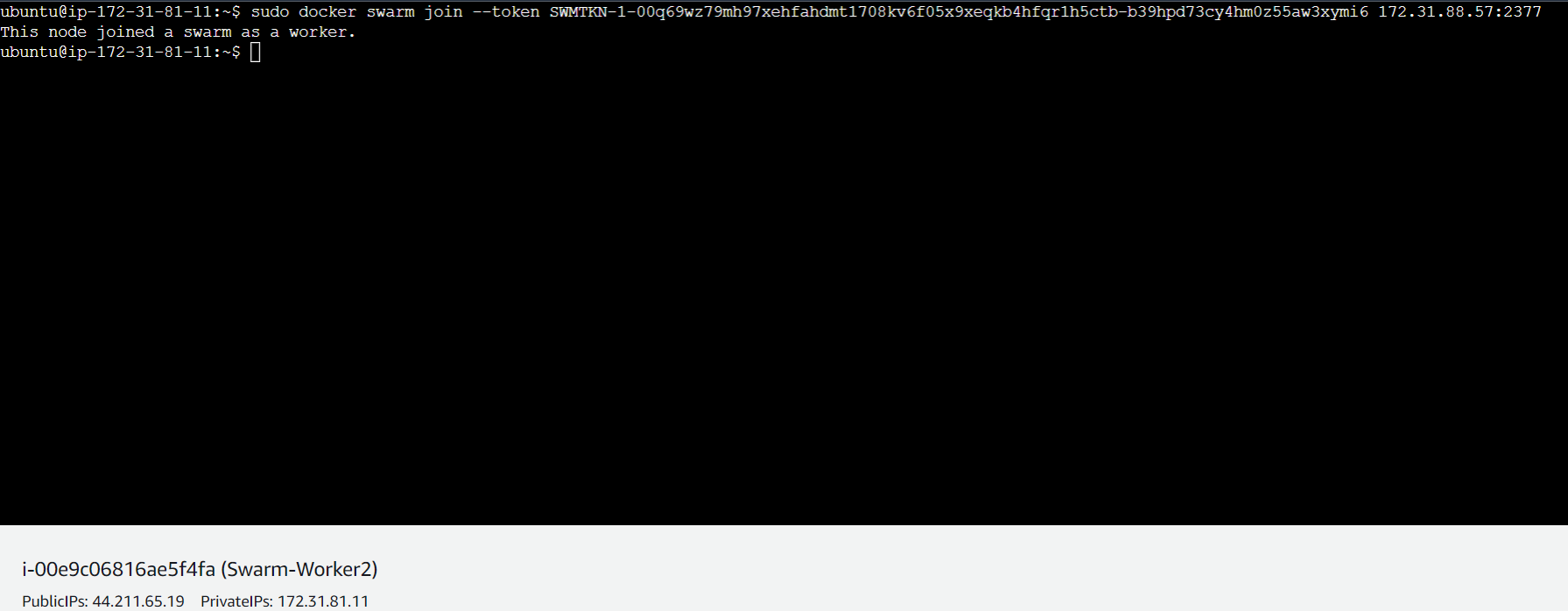

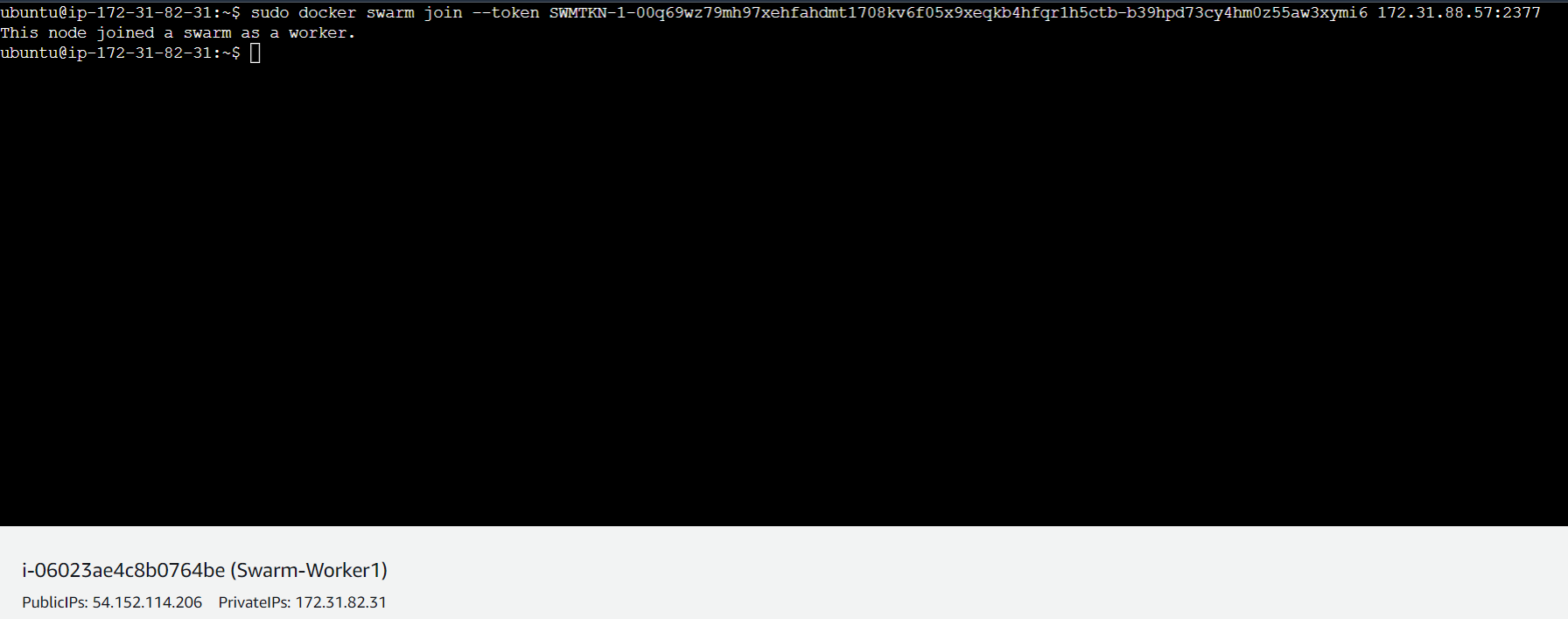

sudo docker swarm join --token SWMTKN-1-00q69wz79mh97xehfahdmt1708kv6f05x9xeqkb4hfqr1h5ctb-b39hpd73cy4hm0z55aw3xymi6 172.31.88.57:2377

docker swarm join followed by --token , the generated token (in this case SWMTKN-1-00q69wz79mh97xehfahdmt1708kv6f05x9xeqkb4hfqr1h5ctb-b39hpd73cy4hm0z55aw3xymi6 ) and the leader's address and port ( 172.31.88.57:2377 ) is the command that makes a worker join the leader node.

Run the above command on each worker node and it will join the leader, as below.

Now both worker servers have joined the leader.

NB: If you missed the token, run sudo docker swarm join-token worker on the leader to print the join command again.
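If you want only the token, for example to paste it into a script, the -q option of join-token prints the token on its own. Here is a minimal sketch that rebuilds the join command from it; the function name is hypothetical and the address is the leader's private IP used in this post.

```shell
# Run on the leader. Prints the command a worker has to run to join.
# $1: address of the leader (172.31.88.57 in this walkthrough).
print_join_command() {
    token=$(sudo docker swarm join-token -q worker) || return 1
    echo "sudo docker swarm join --token $token $1:2377"
}

# Example, run on the leader, then copy the printed line to each worker:
#   print_join_command 172.31.88.57
```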

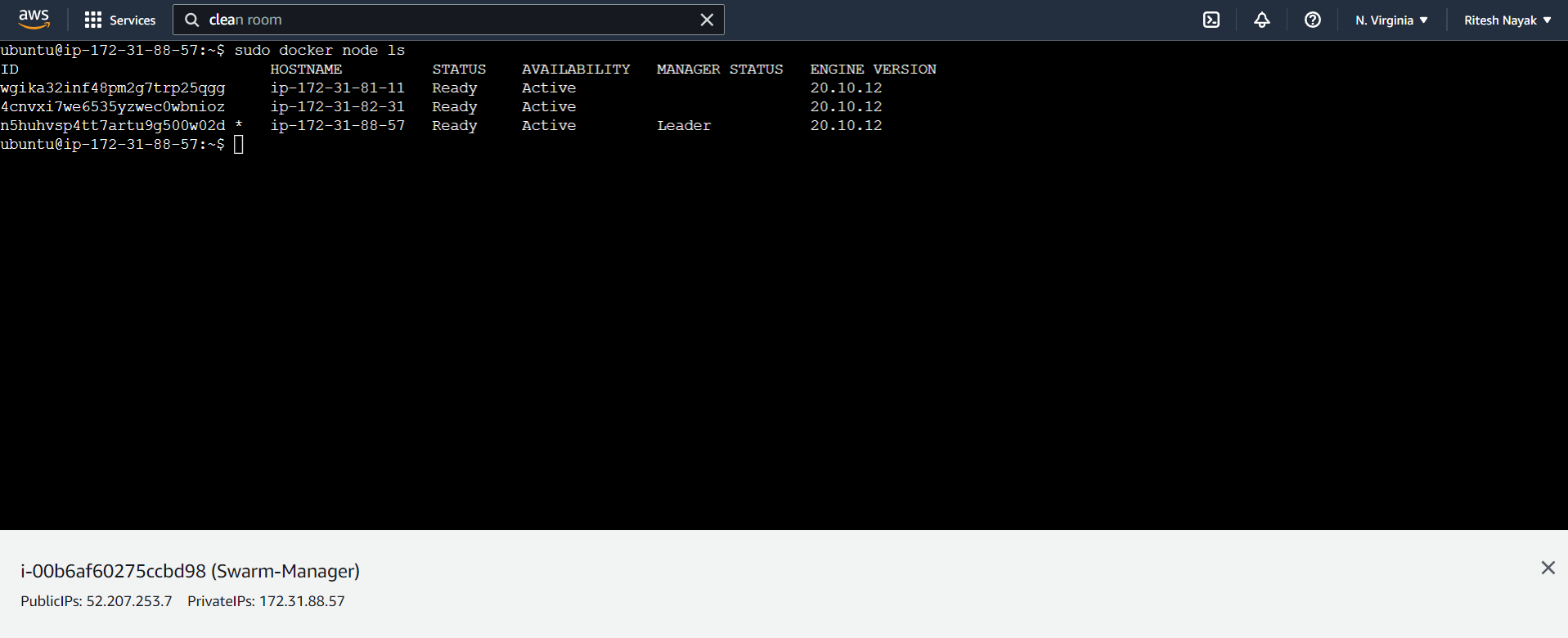

Step-6(Cross checking if workers are added)

Running sudo docker node ls on the manager node again shows that the workers have been added, as below:

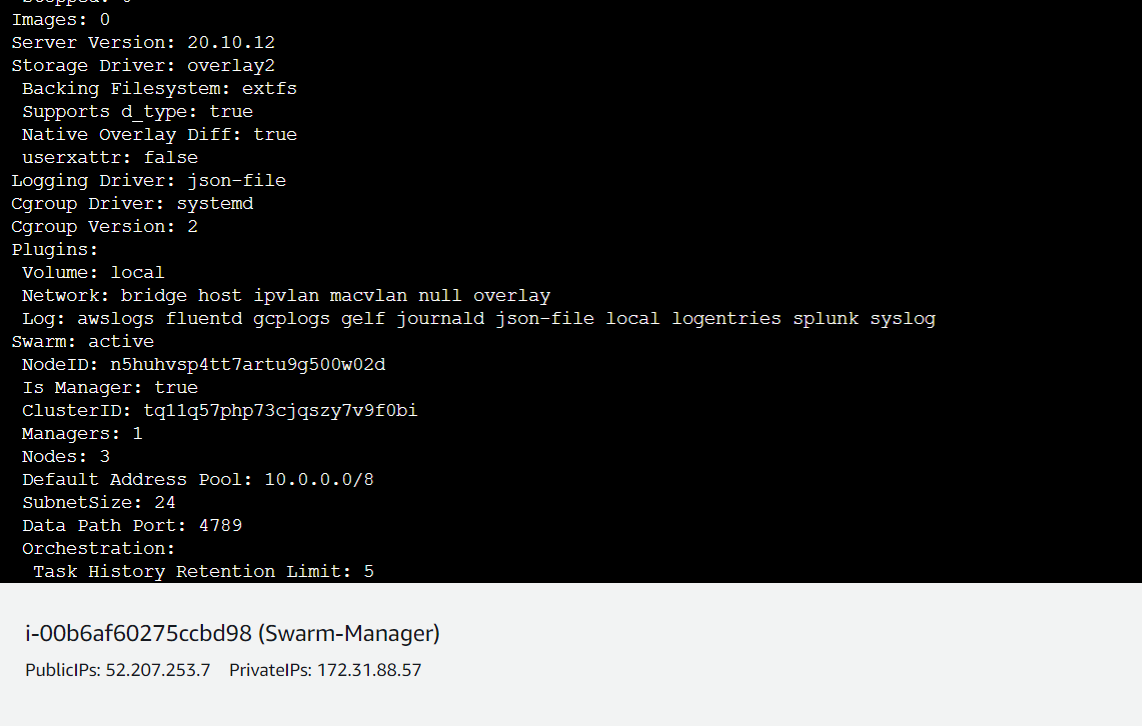

NB: sudo docker info gives complete information about the Docker engine, as below:

Above you can see Is Manager: true because this is the docker info output of the leader node. On the worker servers it will be Is Manager: false .

Step-7(Service Creation for the web app)

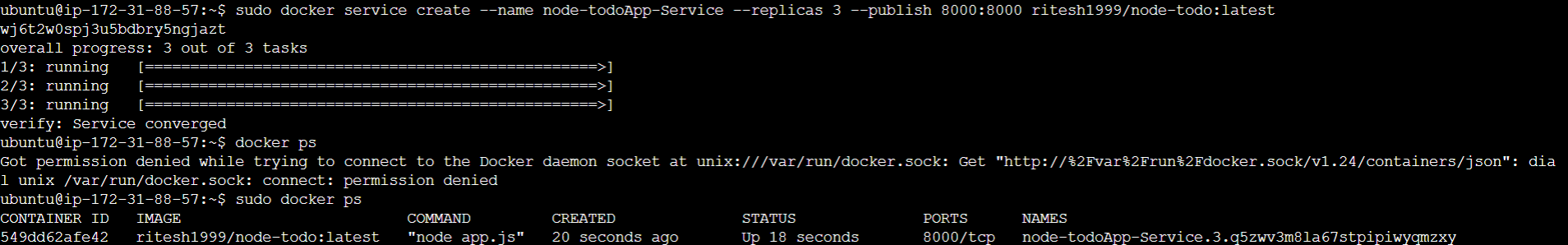

sudo docker service create --name node-todoApp-Service --replicas 3 --publish 8000:8000 ritesh1999/node-todo:latest

The above command creates a service named node-todoApp-Service . With --replicas 3 , three replicas are created across the 3 servers, including the leader.

--publish 8000:8000 exposes port 8000 of the container on port 8000 of the swarm nodes.

In the picture below you can see that the service node-todoApp-Service has been created, along with its containers.

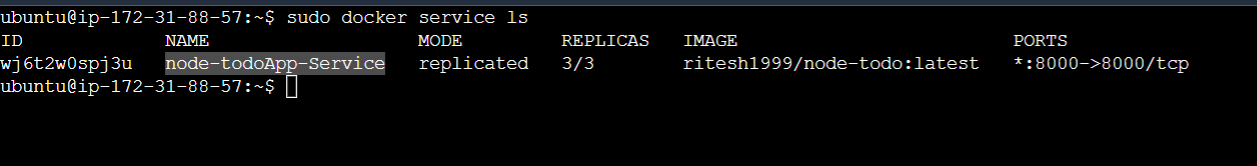

sudo docker service ls , run on the leader node, lists the active services along with details such as name, mode and replica count, as below. Here 3/3 replicas means that 3 of the 3 requested replicas (containers) are running across the linked workers and the leader.
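The replica column can also be checked from a script, which is handy when you want to wait until a service is fully up. This is only a sketch: the function name service_converged is made up, and it simply compares the two numbers in the REPLICAS column.

```shell
# Succeeds (exit status 0) when the running replica count equals the desired count.
# $1: service name, e.g. node-todoApp-Service
service_converged() {
    # The name filter matches by prefix, so awk keeps only the exact name.
    replicas=$(sudo docker service ls --filter "name=$1" \
        --format '{{.Name}} {{.Replicas}}' \
        | awk -v svc="$1" '$1 == svc { print $2 }')
    running=${replicas%%/*}   # part before the slash, e.g. 3
    desired=${replicas##*/}   # part after the slash, e.g. 3
    [ -n "$replicas" ] && [ "$running" = "$desired" ]
}

# Example, run on the leader:
#   service_converged node-todoApp-Service && echo "all replicas are running"
```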

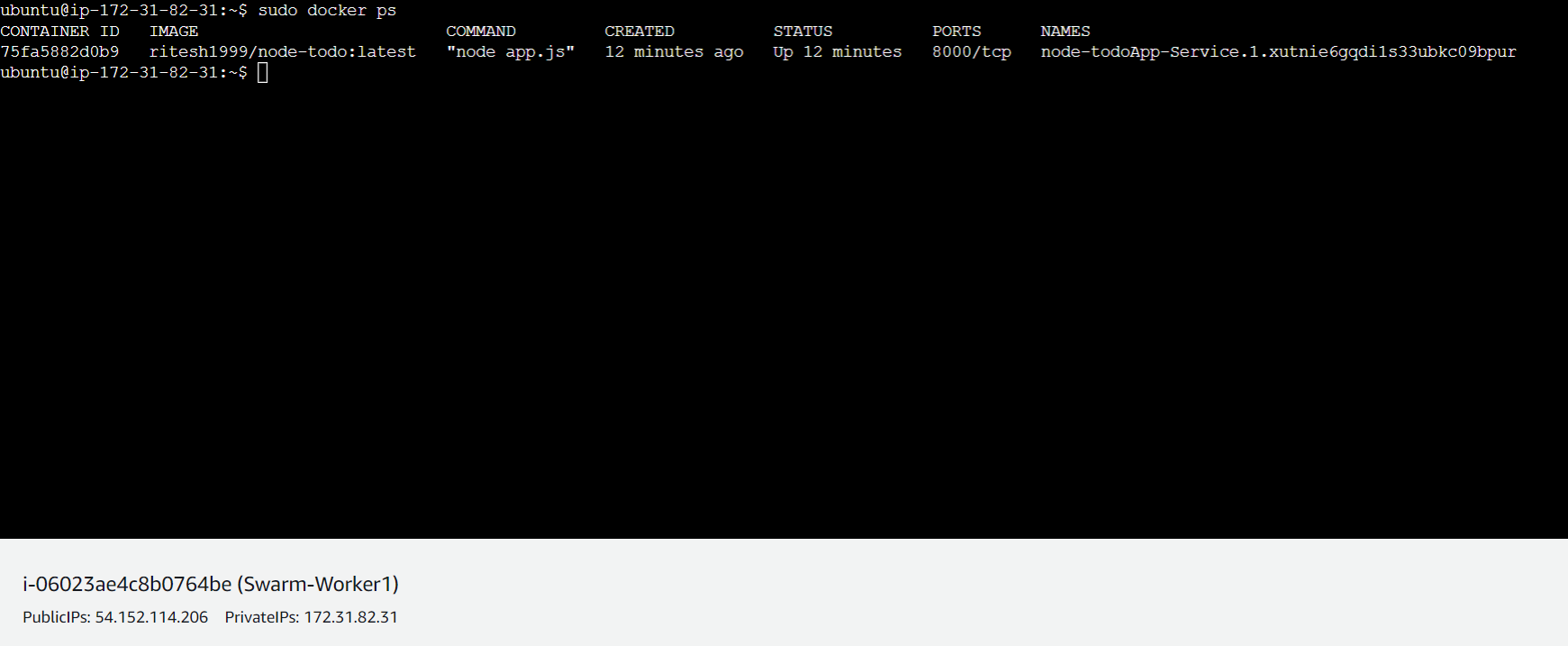

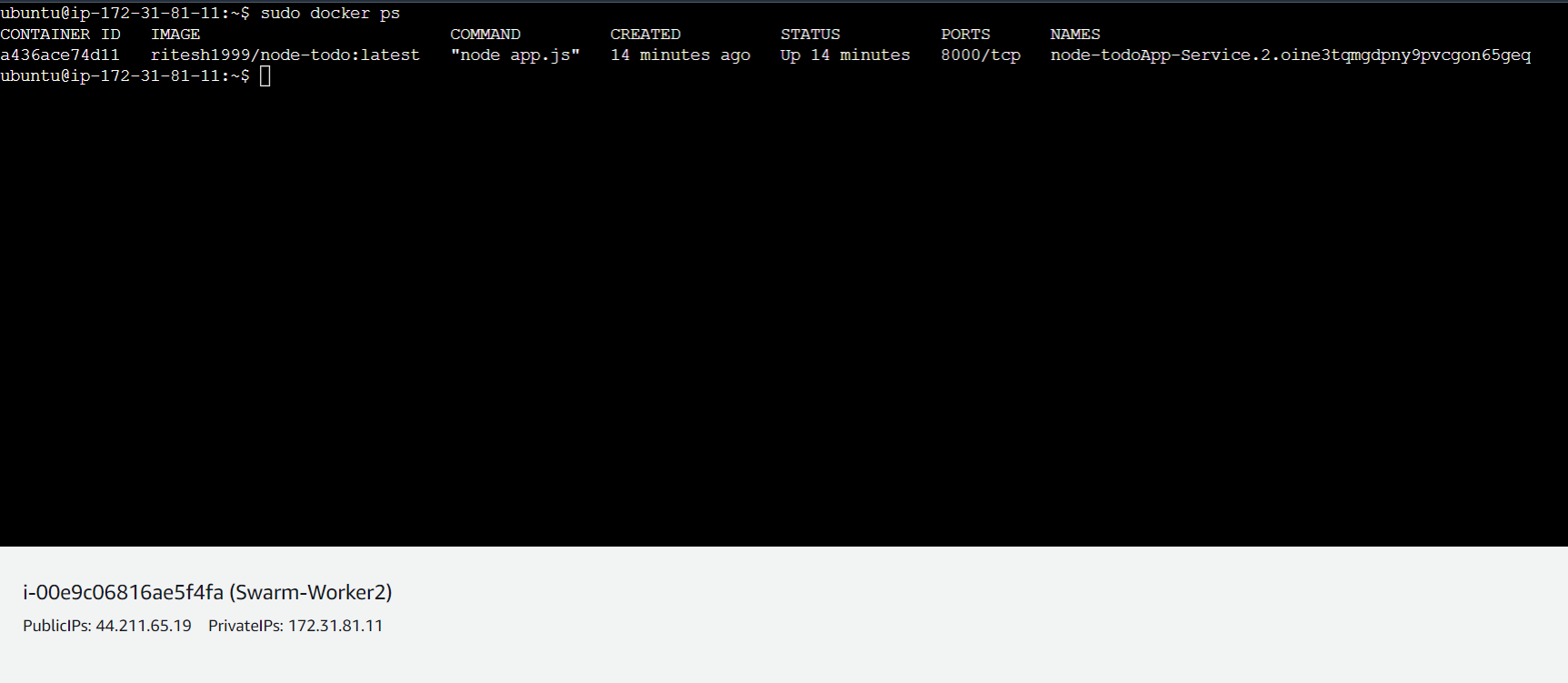

Step-8(Verifying in worker nodes)

Running sudo docker ps on a worker node shows a running container whose name starts with node-todoApp-Service .

Step-9(Accessing the application from browser)

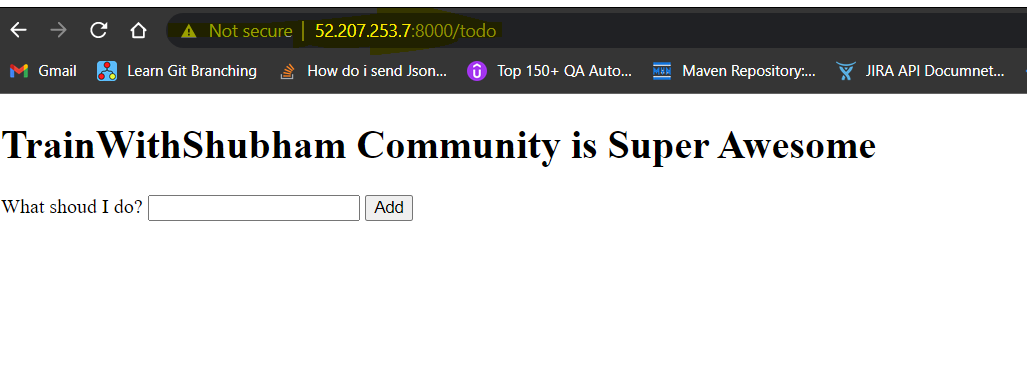

Manager Server:

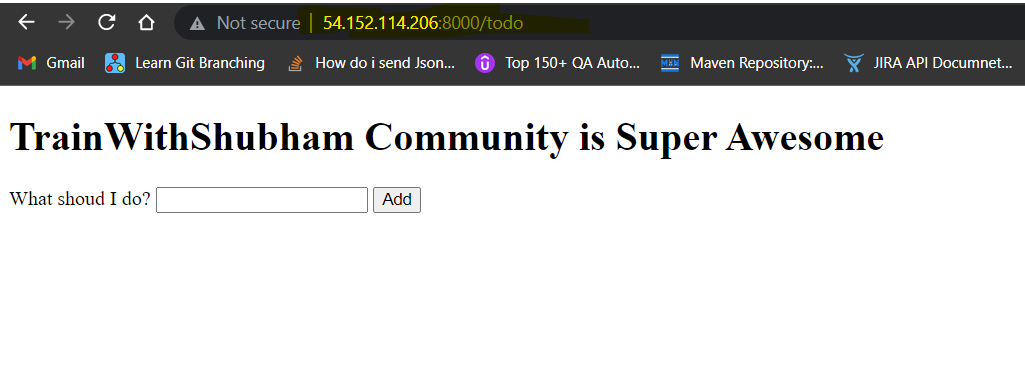

Worker-1 Server:

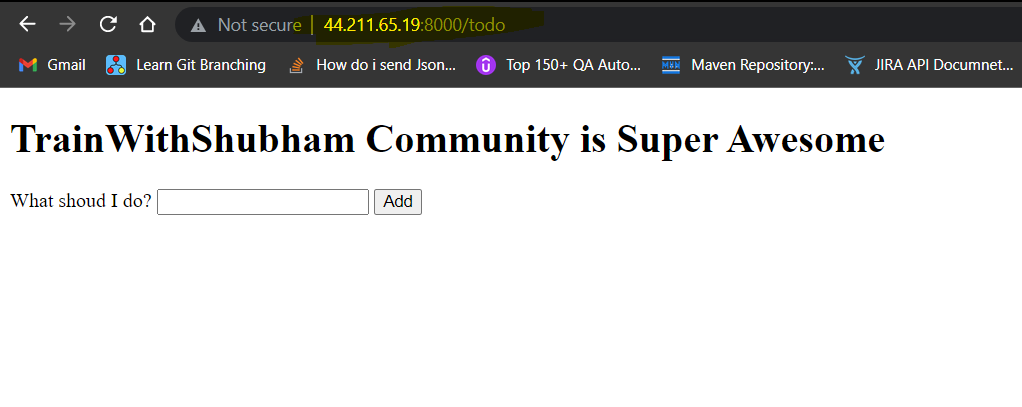

Worker-2 Server

Important: Killing a container doesn't impact the application because the service auto-heals it, but removing the service does.
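You can watch the auto-healing yourself by killing one replica on purpose. The helpers below are a rough sketch with hypothetical names: the first kills one local container of the service on whichever node you run it, the second lists the tasks of the service so you can see the replacement appear.

```shell
# Run on any node that hosts a replica. Kills one local container of the service.
# $1: service name, e.g. node-todoApp-Service
kill_one_replica() {
    cid=$(sudo docker ps -q --filter "name=$1" | head -n 1)
    if [ -z "$cid" ]; then
        echo "no local container found for $1" >&2
        return 1
    fi
    sudo docker kill "$cid"
}

# Run on the leader. Lists the tasks of the service, including replaced ones.
show_tasks() {
    sudo docker service ps "$1"
}

# Example:
#   kill_one_replica node-todoApp-Service   # on a worker
#   show_tasks node-todoApp-Service         # on the leader
```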

Leaving the Swarm

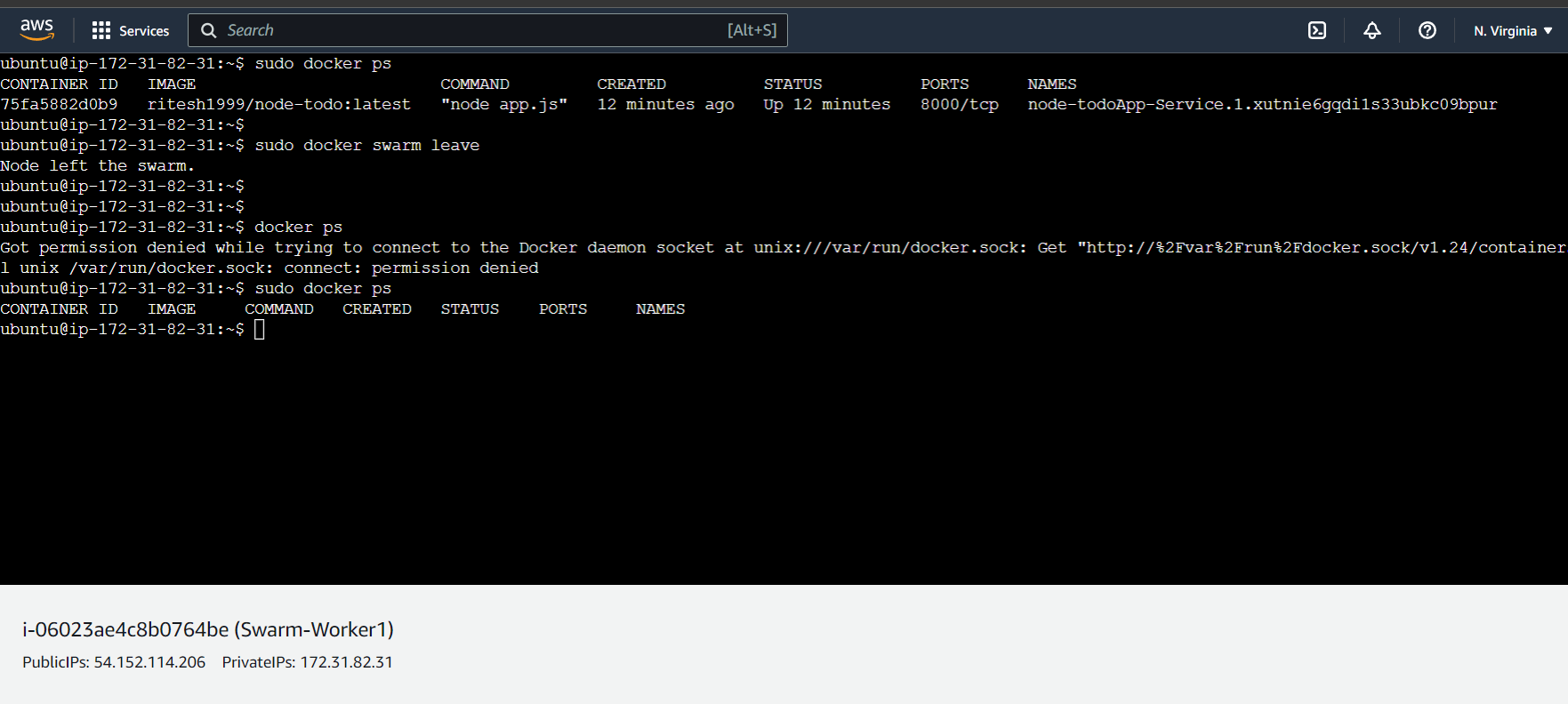

sudo docker swarm leave detaches a node from the swarm. All the service containers running on that node are removed, and the app is no longer served from that node's IP.

NB: The leader can remove any worker, and a worker can also leave by itself.
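For the leader's side of this, the sketch below shows two commands that are commonly used together: draining a node so that its tasks move elsewhere, and removing a node from the node list once it has left. The function names are only for illustration; the node name is whatever docker node ls shows in the HOSTNAME column.

```shell
# Run on the leader. Stops scheduling on the node and moves its tasks away.
# $1: hostname or node ID as shown by docker node ls
drain_node() {
    sudo docker node update --availability drain "$1"
}

# Run on the leader after the node has left the swarm (its status shows Down).
remove_node() {
    sudo docker node rm "$1"
}

# Example, with a placeholder hostname:
#   drain_node worker-1
#   remove_node worker-1
```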

Worker-1 Leaving the Swarm

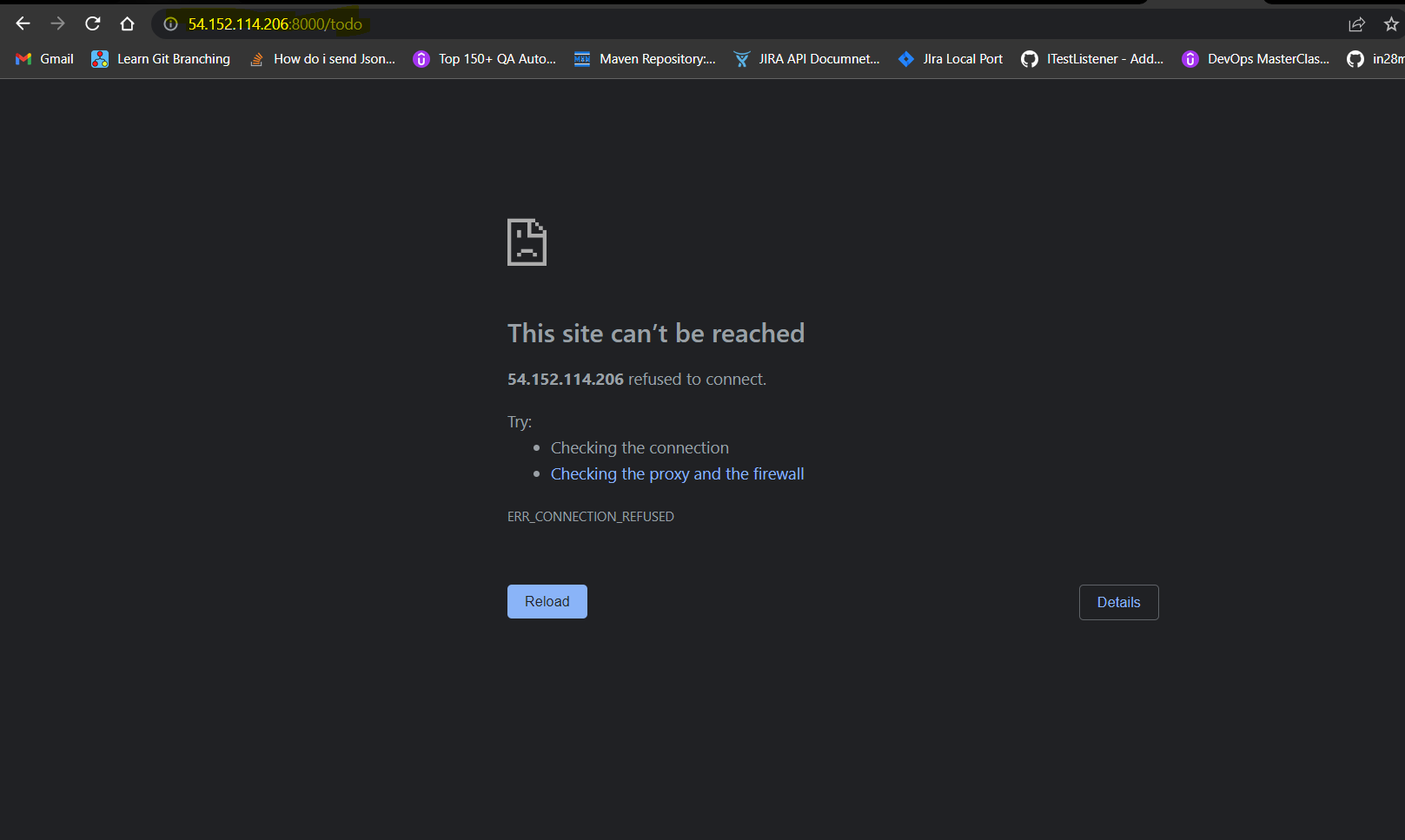

Application on the IP 54.152.114.206 will shut down

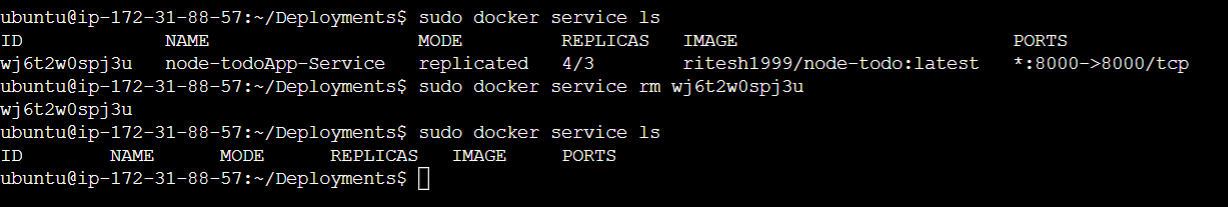

Removing the Service

sudo docker service rm wj6t2w0spj3u (the service ID) removes the service, which kills all of its containers on all the nodes.

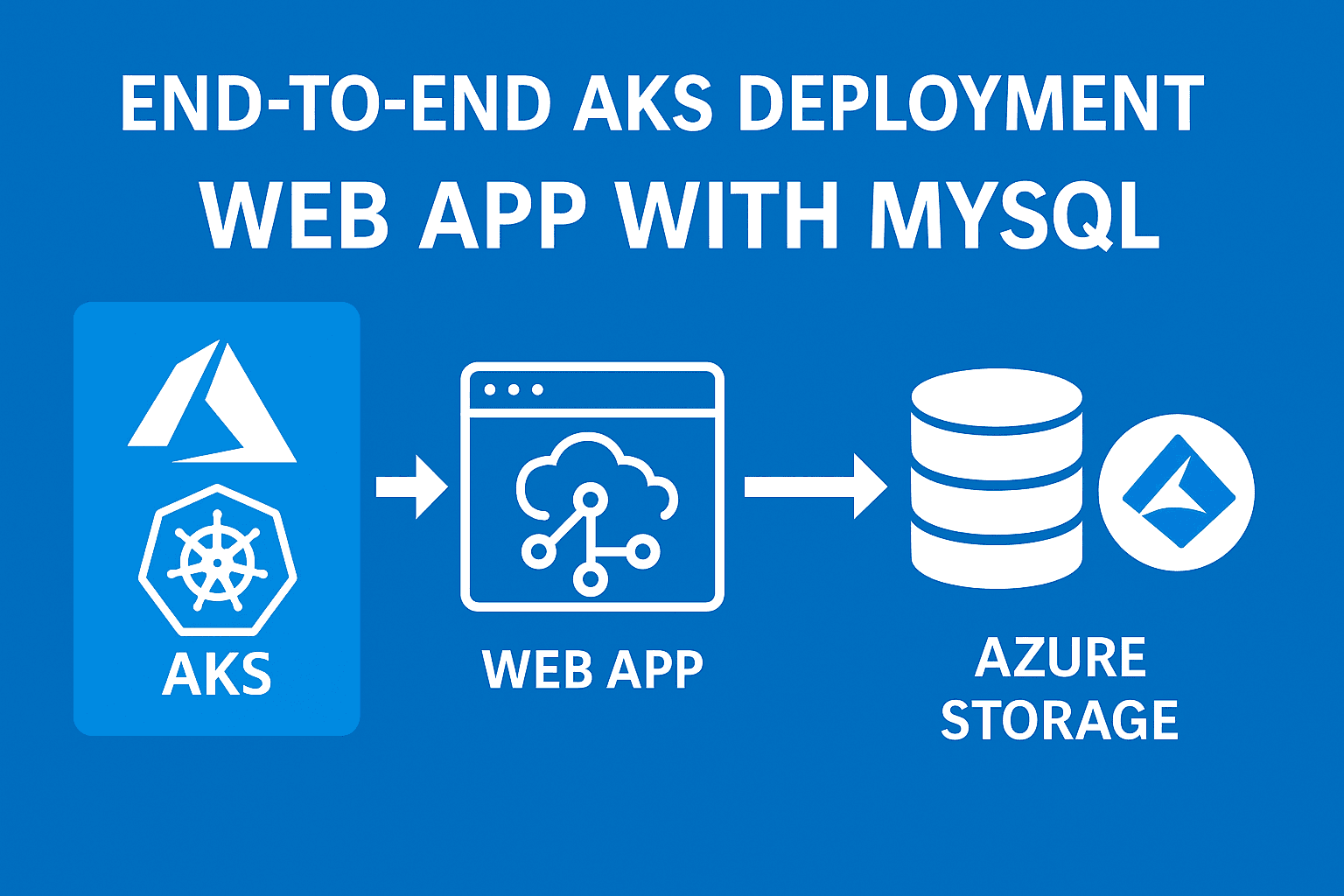

Docker Stack

Docker Stack is a feature in Docker that allows you to deploy and manage multi-container applications using a single command. It uses a Compose file, which is a YAML file that defines the services, networks, and volumes for the application. The docker stack deploy command is used to deploy the application to a swarm, which is a group of Docker engines that are configured to work together as a single virtual system. This allows you to easily scale and manage the application in a production environment.

Creating the Stack from YAML

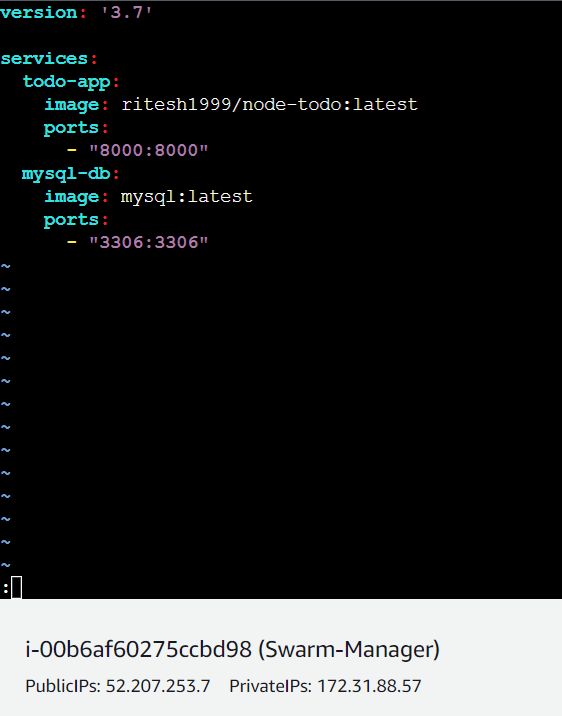

Step-1(Writing a YAML file for deployment configuration)

The YAML file below contains all the details of the services, versions and ports that we generally need to run a Docker service.

The YAML file should be stored on the Leader node, as the deployment starts from the leader.
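For readers who cannot see the picture, here is a hypothetical sketch of what such a file could look like, written out by a small shell helper. Only the service names ( todo-app , mysql-db ), the image ritesh1999/node-todo:latest and port 8000 come from this post; the MySQL image tag, the replica counts and the environment values are assumptions and should be replaced with your own.

```shell
# Write a sample stack file. $1: target path, e.g. todoAppCluster.yaml
write_stack_file() {
    cat > "$1" <<'EOF'
version: "3.8"
services:
  todo-app:
    image: ritesh1999/node-todo:latest
    ports:
      - "8000:8000"
    deploy:
      replicas: 2                      # assumed value
  mysql-db:
    image: mysql:8.0                   # assumed image tag
    environment:
      MYSQL_ROOT_PASSWORD: change-me   # placeholder, set your own
      MYSQL_DATABASE: todo             # hypothetical database name
    deploy:
      replicas: 1                      # assumed value
EOF
}

# Example, run on the leader:
#   write_stack_file todoAppCluster.yaml
```

The official MySQL image refuses to start unless a root password option such as MYSQL_ROOT_PASSWORD is set, which is why the sketch includes an environment section.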

Step-2(Running the deployment file to build the stack)

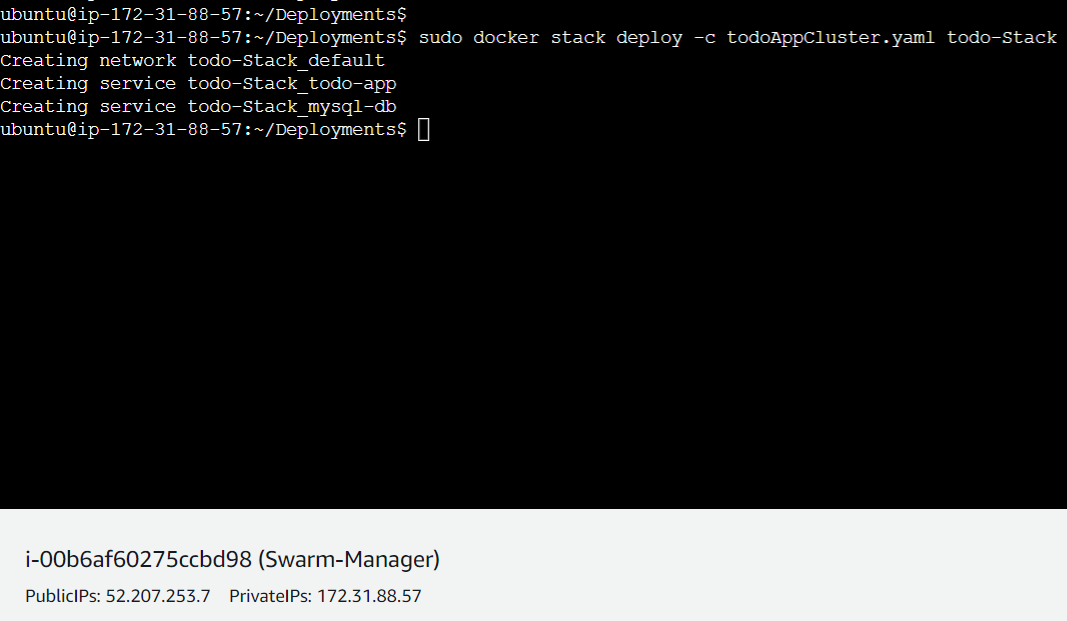

sudo docker stack deploy -c todoAppCluster.yaml todo-Stack : this command builds the stack from the file.

Here todoAppCluster.yaml refers to the deployment configuration file and todo-Stack refers to the name of the stack.

Now, as the above picture shows:

Creating network : a network is being created so that the containers can communicate with each other.

Creating service : all the services mentioned in the YAML file, here todo-Stack_todo-app and todo-Stack_mysql-db , are being created.

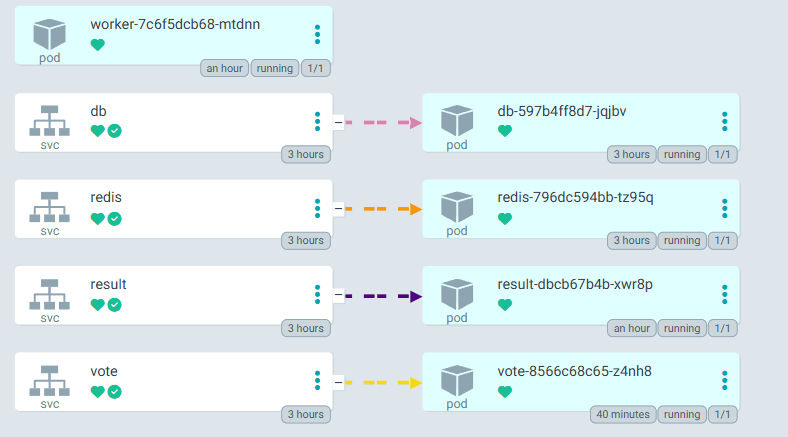

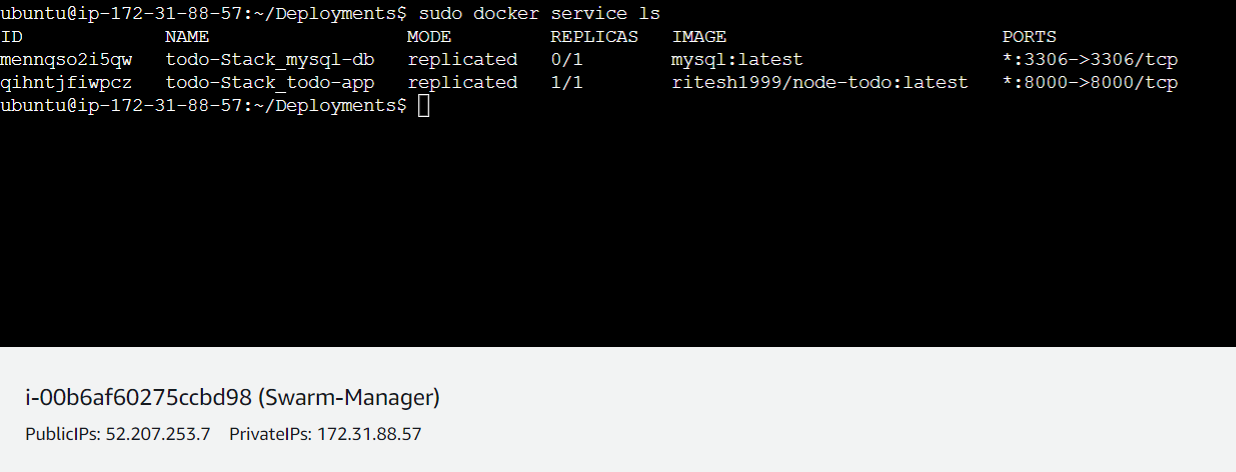

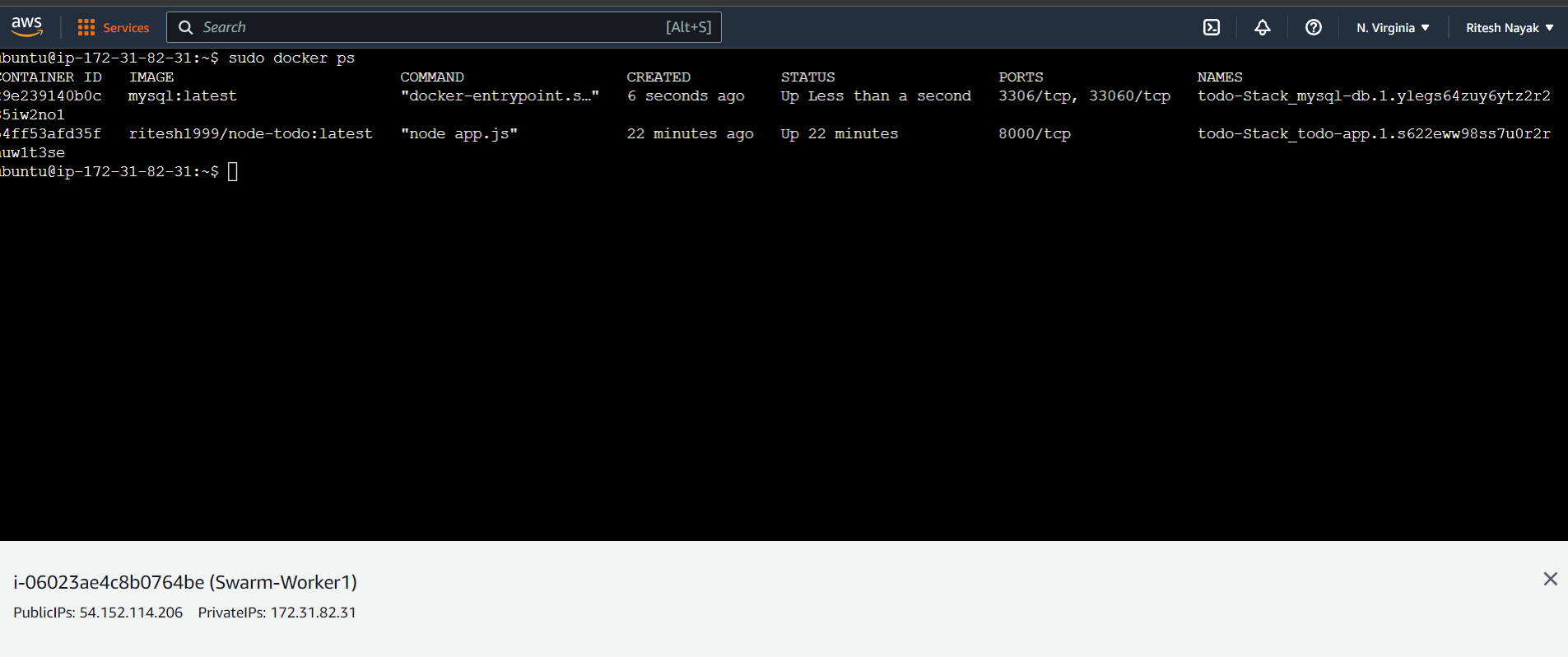

Step-3(Verifying the list of services)

sudo docker service ls will show the list of services. In the picture below we can see that both services, todo-app and mysql-db , are running.

Step-4(Verifying if the services are running properly)

Below you can see that we ran the YAML on the manager server but the containers are up on worker 1. This is the beauty of Swarm: the manager places the containers on the nodes for us.

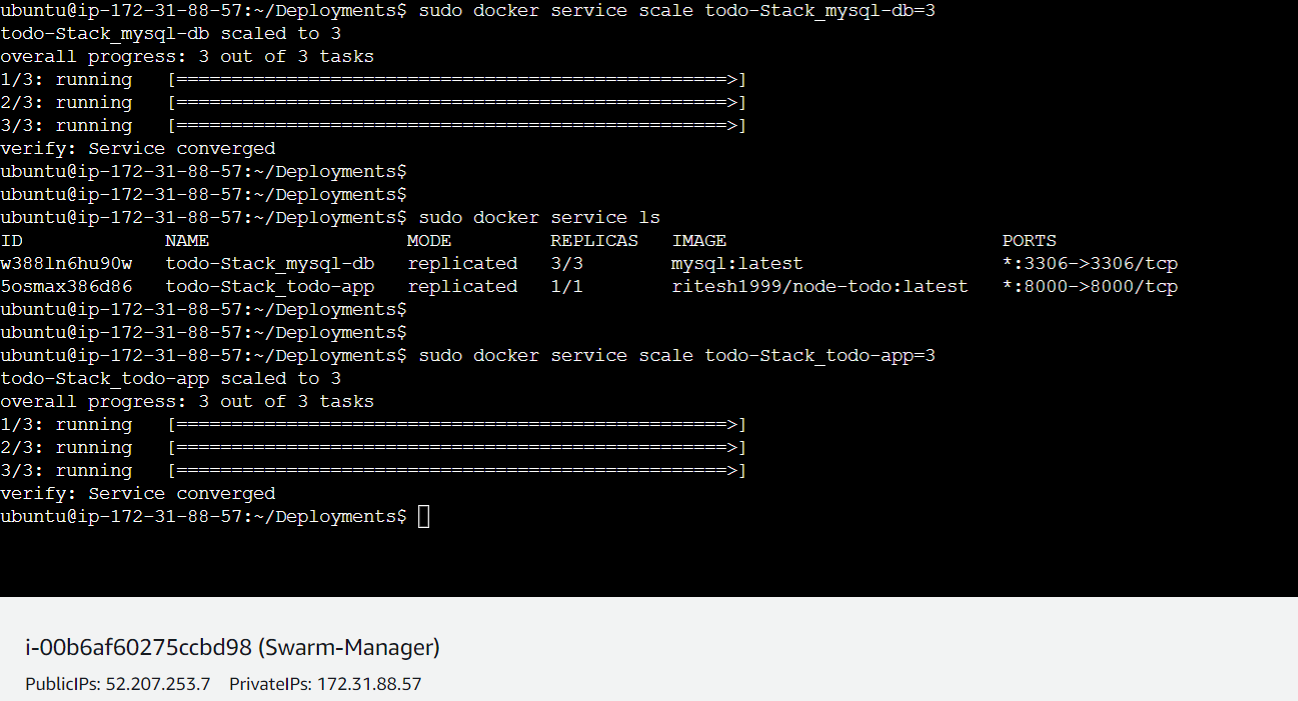

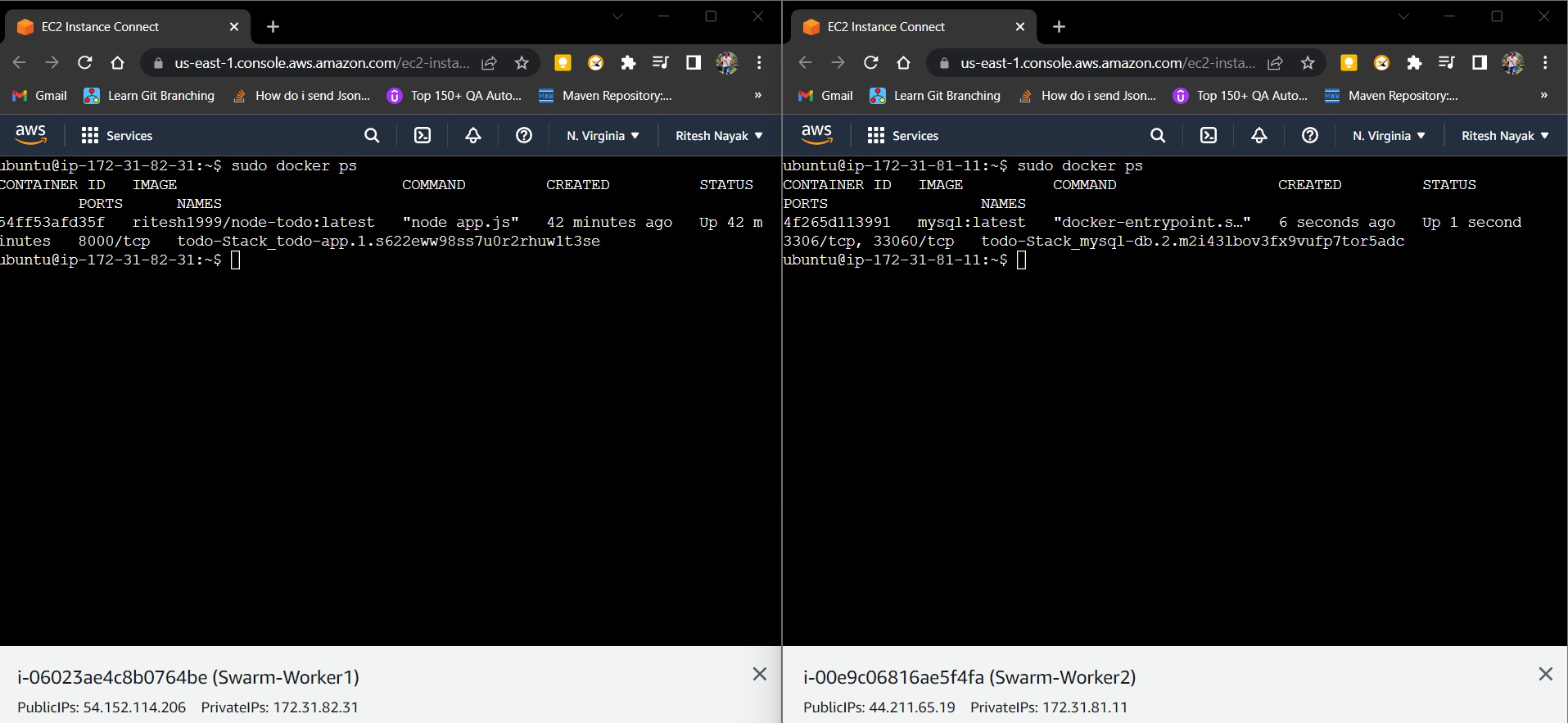

Scaling a particular service(Replicating a particular service)

Let's consider the above scenario, where mysql-db is unable to scale properly because its replicas are not formed.

If a server goes down and we want to scale a service up, or if our customer base grows and we need more capacity, we can scale a service as follows:

sudo docker service scale todo-Stack_mysql-db=3 scales mysql-db and sudo docker service scale todo-Stack_todo-app=3 scales todo-app . Each service will then have 3 replicas.
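docker service scale accepts several SERVICE=REPLICAS pairs at once, so both services can be scaled with one command. The helper below is a small sketch (its name and argument order are made up) that builds those pairs from the stack name.

```shell
# Scale several services of one stack to the same replica count.
# $1: stack name, $2: replica count, remaining arguments: service names
scale_stack_services() {
    stack="$1"
    count="$2"
    shift 2
    targets=""
    for svc in "$@"; do
        targets="$targets ${stack}_${svc}=${count}"
    done
    # Left unquoted on purpose so that each pair becomes its own argument.
    sudo docker service scale $targets
}

# Example, run on the leader:
#   scale_stack_services todo-Stack 3 todo-app mysql-db
```

Keep in mind that three replicas of a database without shared storage are three independent databases, so scaling todo-app this way is the safer experiment.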

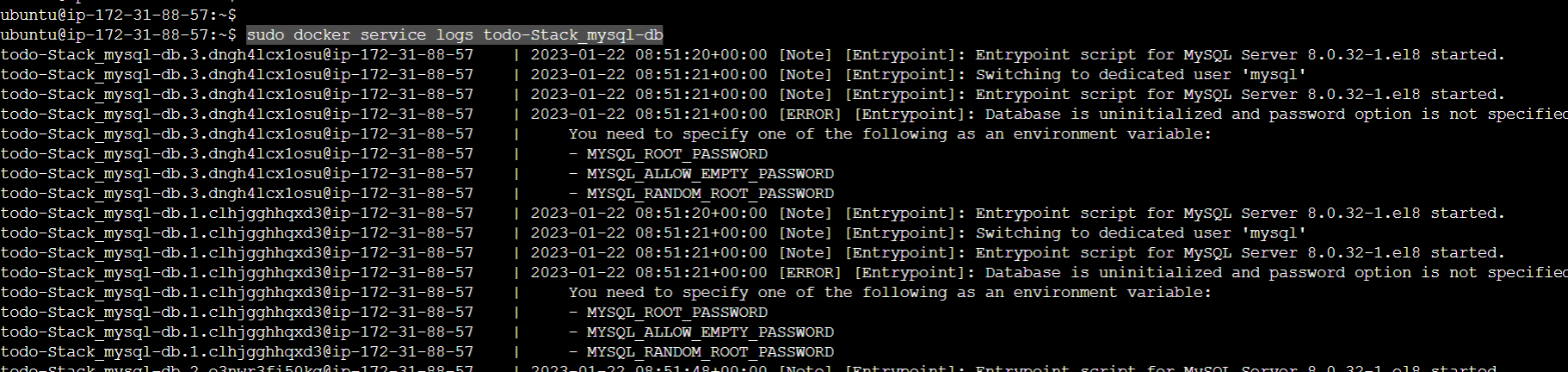

Service Logs

sudo docker service logs todo-Stack_mysql-db (the service name): it prints the logs of a particular service, which helps you debug issues such as a service that fails to start. In the example below, mysql-db failed because of missing environment variables; we will deal with this by removing the entire stack going forward.
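When a service keeps failing, the task list often shows the reason faster than the logs do. As a minimal sketch (the function name is hypothetical), the helper below prints the untruncated task history first and then the last 50 log lines with timestamps.

```shell
# Run on the leader. $1: service name, e.g. todo-Stack_mysql-db
debug_service() {
    # Task history with the full ERROR column instead of a shortened one.
    sudo docker service ps --no-trunc "$1"
    # Only the most recent 50 log lines, each prefixed with a timestamp.
    sudo docker service logs --tail 50 --timestamps "$1"
}

# Example:
#   debug_service todo-Stack_mysql-db
```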

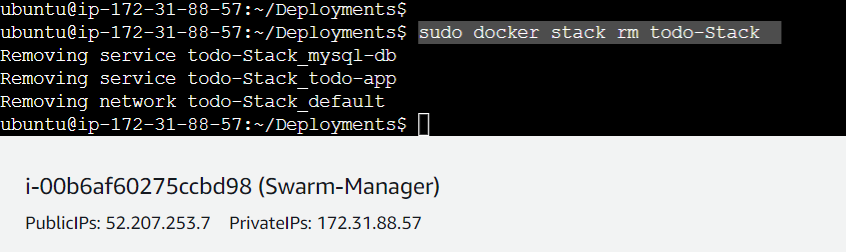

Removing a Stack

sudo docker stack rm todo-Stack : removes the complete stack, which erases everything that was created with that stack.

Now sudo docker service ls shows nothing, because all the services were removed along with the stack, as below: